San Francisco, London - A team of US researchers has developed a technique to help doctors learn to read patients’ facial expressions, using a robot that can express pain. Reading expressions can help doctors diagnose disease and gauge how much pain a patient feels, but it is a difficult skill to learn and practice.

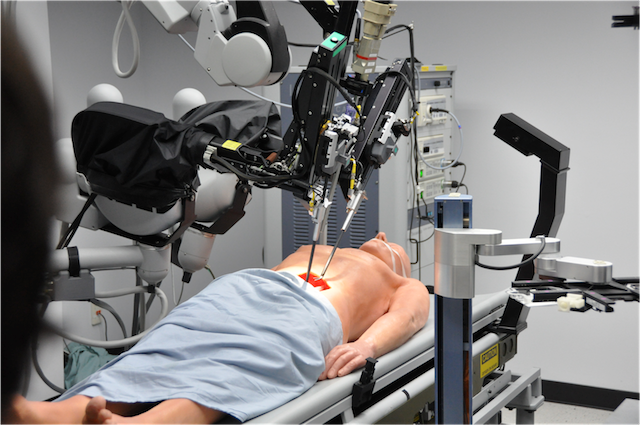

Many doctors already use robotic patient simulators in their training to practice procedures and test their diagnostic abilities. “These robots can bleed, breathe and react to medication,” says Laurel Riek at the University of California, San Diego. “They are incredible, but there is a major design flaw – their face.”

Patient simulators usually have static faces, often with an open mouth so doctors can practice checking airways. This means that, unlike a real patient, they show no emotion.

To change this, Riek and her team have given a robotic face the ability to make expressions of pain, disgust and anger, to help emulate realistic patient feedback.

“Interpreting a patient’s facial expressions can help determine if they are having a stroke, are in pain or are having a reaction to medication, so doctors need to be able to do this from day one,” Riek told the New Scientist website.

The researchers collected videos of people expressing pain, disgust and anger, and used face-tracking software to convert their expressions into a series of moving points. They then mapped these points onto a robot face with realistic human features and rubber skin.
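For readers curious what such a mapping might look like in software, here is a minimal Python sketch. It is not the team's actual code: the landmark names, the gain values and the idea of driving one actuator per tracked point from its vertical movement are all assumptions made for illustration. It measures how far each tracked point has moved from a neutral face, then scales that movement into a bounded actuator command.

```python
from typing import Dict, Tuple

Point = Tuple[float, float]   # (x, y) position in pixels
Frame = Dict[str, Point]      # hypothetical landmark name -> tracked position


def displacements(neutral: Frame, frame: Frame) -> Dict[str, Point]:
    """Offset of each tracked point from its position on the neutral face."""
    return {
        name: (frame[name][0] - neutral[name][0],
               frame[name][1] - neutral[name][1])
        for name in neutral
    }


def to_actuator_commands(
    offsets: Dict[str, Point],
    gains: Dict[str, float],
    max_travel: float = 1.0,
) -> Dict[str, float]:
    """Scale vertical offsets into commands clamped to [-max_travel, max_travel].

    Assumption: each landmark drives one actuator, using only its
    vertical movement. A real robot face would need a richer mapping.
    """
    commands = {}
    for name, (_dx, dy) in offsets.items():
        if name in gains:
            value = gains[name] * dy
            commands[name] = max(-max_travel, min(max_travel, value))
    return commands


# Made-up example: one neutral frame and one frame from a pain expression.
neutral = {"brow_left": (100.0, 80.0), "mouth_corner_left": (110.0, 150.0)}
pained = {"brow_left": (100.0, 86.0), "mouth_corner_left": (108.0, 145.0)}
gains = {"brow_left": 0.125, "mouth_corner_left": 0.125}  # illustrative values

commands = to_actuator_commands(displacements(neutral, pained), gains)
print(commands)  # {'brow_left': 0.75, 'mouth_corner_left': -0.625}
```

Clamping the output keeps a noisy tracking frame from pushing an actuator past its range, which is one reason a sketch like this scales and bounds the points rather than copying them directly.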

The researchers think that robots able to express emotions can help doctors better assess a patient’s pain. Such robots could also benefit students learning to recognize physical symptoms and read facial expressions.

Priscilla Briggs, a software engineer at Google, said the technique could soon be used to better train medical professionals and improve patient outcomes, but that further work will be required to show that a robot’s expressions can improve clinicians’ performance.